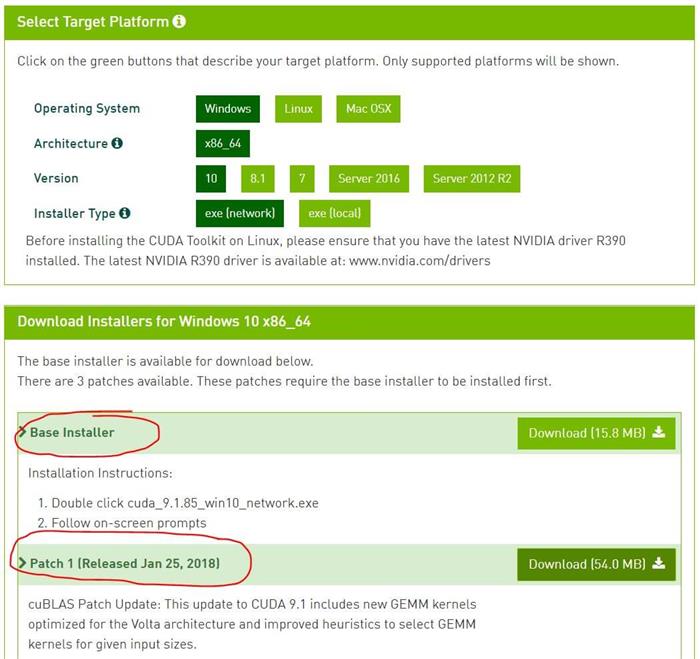

Added 7_CUDALibraries/cannyEdgeDetectorNPP.

Added 7_CUDALibraries/FilterBorderControlNPP. Demonstrates how any border version of an NPP filtering function can be used in the most common mode (with border control enabled), how it can be used to duplicate the results of the equivalent non-border version of the NPP function, and how it can be used to enable and disable border control on various source image edges depending on what portion of the source image is being used.

Updated 6_Advanced/shfl_scan to use the newly added `*_sync` equivalents of the shfl intrinsics.

Updated 0_Simple/simpleVoteIntrinsics to use the newly added `*_sync` equivalents of the vote intrinsics `__any` and `__all`.

Demonstrates a GEMM computation using the Warp Matrix Multiply and Accumulate (WMMA) API introduced in CUDA 9, as well as the new Tensor Cores introduced in the Volta chip family.

Added 0_Simple/simpleCooperativeGroups. Illustrates basic usage of Cooperative Groups within the thread block.

Added Cooperative Groups (CG) support to several samples; notable ones include 6_Advanced/cdpQuadtree, 6_Advanced/cdpAdvancedQuicksort, 6_Advanced/threadFenceReduction, 3_Imaging/dxtc, 4_Finance/MonteCarloMultiGPU, and 0_Simple/matrixMul_nvrtc.

Added 6_Advanced/conjugateGradientMultiBlockCG. Demonstrates a conjugate gradient solver on the GPU using Multi Block Cooperative Groups.

Added 6_Advanced/reductionMultiBlockCG. Demonstrates single-pass reduction using Multi Block Cooperative Groups.

Added 6_Advanced/warpAggregatedAtomicsCG. Demonstrates warp-aggregated atomics using Cooperative Groups.

Added 7_CUDALibraries/nvgraph_SpectralClustering. Demonstrates Spectral Clustering using the NVGRAPH library.

Added Windows support to 6_Advanced/c++11_cuda.

Added two new reduction kernels in 6_Advanced/reduction: one demonstrates the `__reduce_add_sync` intrinsic supported on compute capability 8.0, and the other uses the `cooperative_groups::reduce` function, introduced in CUDA 11.0, which performs `thread_block_tile`-level reduction.

Removed 7_CUDALibraries/nvgraph_Pagerank, 7_CUDALibraries/nvgraph_SemiRingSpMV, 7_CUDALibraries/nvgraph_SpectralClustering, and 7_CUDALibraries/nvgraph_SSSP, as the NVGRAPH library is dropped from CUDA Toolkit 11.0.

Added 6_Advanced/cudaCompressibleMemory. Demonstrates compressible memory allocation using the cuMemMap API.

Demonstrates `binary_partition` cooperative groups creation and usage in a divergent path.

Added a warp-aggregated atomic multi-bucket increments kernel using `labeled_partition` cooperative groups in 6_Advanced/warpAggregatedAtomicsCG, which can be used on compute capability 7.0 and above GPU architectures.

Demonstrates tf32 (e8m10) GEMM computation using the WMMA API for tf32 employing the Tensor Cores. Also makes use of asynchronous copy from global to shared memory using the CUDA pipeline, which leads to further performance gain.

Demonstrates `__nv_bfloat16` (e8m7) GEMM computation using the WMMA API for `__nv_bfloat16` employing the Tensor Cores. Also makes use of asynchronous copy from global to shared memory using the CUDA pipeline, which leads to further performance gain.

Demonstrates double precision GEMM computation using the WMMA API for double precision employing the Tensor Cores.

Demonstrates the stream attributes that affect L2 locality.

Added 0_Simple/globalToShmemAsyncCopy. Demonstrates asynchronous copy of data from global to shared memory using the CUDA pipeline.

Q: Is cuDNN included as part of the CUDA Toolkit?

A: cuDNN is our library for Deep Learning frameworks, and can be downloaded separately from the cuDNN home page.
Q: Where can I find old versions of the CUDA Toolkit?

A: Older versions of the toolkit can be found on the Legacy CUDA Toolkits page.

Q: Where do I get the GPU Deployment Kit (GDK) for Windows?

A: The installers give you an option to install the GDK. If you only want to install the GDK, use the network installer for efficiency.

Q: What is the difference between the Network Installer and the Local Installer?

A: The Local Installer has all of the components embedded into it (toolkit, driver, samples). This makes the installer very large, but once downloaded, it can be installed without an internet connection. The Network Installer is a small executable that only downloads the necessary components dynamically during the installation, so an internet connection is required.

A: Previous releases of the CUDA Toolkit had separate installation packages for notebook and desktop systems. Beginning with CUDA 7.0, these packages have been merged into a single package that is capable of installing on all supported platforms.
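
The 6_Advanced/shfl_scan entry in the changelog above refers to the `*_sync` shuffle intrinsics. The following is a minimal sketch, not the sample's own code. It computes an inclusive prefix sum across one warp using `__shfl_up_sync`. The names `warpInclusiveScan`, `warpScanKernel`, and `runWarpScanDemo` are hypothetical, and the launch assumes a single block of exactly 32 threads.

```cuda
#include <cassert>
#include <cuda_runtime.h>

// Inclusive prefix sum over the 32 lanes of one warp using only __shfl_up_sync
// (Hillis-Steele pattern: five rounds with offsets 1, 2, 4, 8, 16).
__device__ int warpInclusiveScan(int value) {
    const unsigned fullMask = 0xffffffffu;  // all 32 lanes take part in every shuffle
    const int lane = threadIdx.x & 31;
    for (int offset = 1; offset < 32; offset <<= 1) {
        // Fetch the running sum held by the lane `offset` positions lower.
        int lower = __shfl_up_sync(fullMask, value, offset);
        if (lane >= offset) value += lower;  // lanes below `offset` have no lower neighbor
    }
    return value;
}

__global__ void warpScanKernel(const int* in, int* out) {
    out[threadIdx.x] = warpInclusiveScan(in[threadIdx.x]);
}

// Hypothetical host helper: scans 32 ones, so lane i should end up holding i + 1.
bool runWarpScanDemo() {
    int hostIn[32], hostOut[32];
    for (int i = 0; i < 32; ++i) hostIn[i] = 1;
    int *devIn = nullptr, *devOut = nullptr;
    if (cudaMalloc(&devIn, sizeof(hostIn)) != cudaSuccess) return false;
    if (cudaMalloc(&devOut, sizeof(hostOut)) != cudaSuccess) { cudaFree(devIn); return false; }
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    warpScanKernel<<<1, 32>>>(devIn, devOut);
    cudaError_t status = cudaMemcpy(hostOut, devOut, sizeof(hostOut), cudaMemcpyDeviceToHost);
    cudaFree(devIn);
    cudaFree(devOut);
    if (status != cudaSuccess) return false;
    for (int i = 0; i < 32; ++i)
        if (hostOut[i] != i + 1) return false;
    return true;
}
```

The explicit `0xffffffff` mask states that all 32 lanes participate. This is the main behavioral difference from the older mask-less shuffle intrinsics.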
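
For the 0_Simple/simpleVoteIntrinsics update, here is a sketch of the `*_sync` vote functions. `__any_sync`, `__all_sync`, and `__ballot_sync` each take a participation mask plus a per-lane predicate. The kernel and the `VoteResult` and `runVoteDemo` helpers are hypothetical, and the launch assumes one warp of 32 threads.

```cuda
#include <cassert>
#include <cuda_runtime.h>

// Each lane votes on whether its input is positive; lane 0 records the warp-wide results.
__global__ void voteKernel(const int* in, int* flags, unsigned* ballotOut) {
    const unsigned fullMask = 0xffffffffu;
    const bool positive = in[threadIdx.x] > 0;
    const int anyPositive = __any_sync(fullMask, positive);       // nonzero if any lane voted true
    const int allPositive = __all_sync(fullMask, positive);       // nonzero only if every lane did
    const unsigned ballot = __ballot_sync(fullMask, positive);    // bit i set when lane i voted true
    if (threadIdx.x == 0) {
        flags[0] = anyPositive != 0;
        flags[1] = allPositive != 0;
        *ballotOut = ballot;
    }
}

struct VoteResult {
    int any;          // 1 if __any_sync saw a positive lane
    int all;          // 1 if __all_sync saw only positive lanes
    unsigned ballot;  // raw __ballot_sync mask
    bool ok;          // false if a CUDA call failed
};

// Hypothetical host helper: only even-numbered lanes receive a positive value.
VoteResult runVoteDemo() {
    VoteResult r{0, 0, 0u, false};
    int hostIn[32];
    for (int i = 0; i < 32; ++i) hostIn[i] = (i % 2 == 0) ? 1 : -1;
    int *devIn = nullptr, *devFlags = nullptr;  // devFlags[0] = any, devFlags[1] = all
    unsigned* devBallot = nullptr;
    cudaMalloc(&devIn, sizeof(hostIn));
    cudaMalloc(&devFlags, 2 * sizeof(int));
    cudaMalloc(&devBallot, sizeof(unsigned));
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    voteKernel<<<1, 32>>>(devIn, devFlags, devBallot);
    int flags[2] = {0, 0};
    cudaMemcpy(flags, devFlags, sizeof(flags), cudaMemcpyDeviceToHost);
    cudaError_t status = cudaMemcpy(&r.ballot, devBallot, sizeof(unsigned), cudaMemcpyDeviceToHost);
    cudaFree(devIn);
    cudaFree(devFlags);
    cudaFree(devBallot);
    r.any = flags[0];
    r.all = flags[1];
    r.ok = (status == cudaSuccess);
    return r;
}
```

With even lanes voting true, the ballot mask has bits 0, 2, 4, and so on set, which is `0x55555555`.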
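
The WMMA GEMM entry in the changelog above can be illustrated at the smallest scale: one warp multiplying a single 16x16x16 tile. This sketch assumes a GPU with Tensor Cores (compute capability 7.0 or newer), compiled with, for example, `-arch=sm_70`. It omits the tiling, shared-memory staging, and alpha/beta scaling that a full GEMM sample would add, and the names `wmmaSingleTile` and `runWmmaDemo` are hypothetical.

```cuda
#include <cassert>
#include <cuda_fp16.h>
#include <cuda_runtime.h>
#include <mma.h>

using namespace nvcuda;

// One warp computes C (float, 16x16) = A (half, row-major) * B (half, column-major).
__global__ void wmmaSingleTile(const half* a, const half* b, float* c) {
    wmma::fragment<wmma::matrix_a, 16, 16, 16, half, wmma::row_major> aFrag;
    wmma::fragment<wmma::matrix_b, 16, 16, 16, half, wmma::col_major> bFrag;
    wmma::fragment<wmma::accumulator, 16, 16, 16, float> accFrag;

    wmma::fill_fragment(accFrag, 0.0f);
    wmma::load_matrix_sync(aFrag, a, 16);            // 16 = leading dimension
    wmma::load_matrix_sync(bFrag, b, 16);
    wmma::mma_sync(accFrag, aFrag, bFrag, accFrag);  // acc = a * b + acc
    wmma::store_matrix_sync(c, accFrag, 16, wmma::mem_row_major);
}

// Hypothetical host helper: with A and B filled with ones, every C element is 16.
bool runWmmaDemo() {
    const int elems = 16 * 16;
    half hostA[elems], hostB[elems];
    float hostC[elems];
    for (int i = 0; i < elems; ++i) {
        hostA[i] = __float2half(1.0f);
        hostB[i] = __float2half(1.0f);
    }
    half *devA = nullptr, *devB = nullptr;
    float* devC = nullptr;
    cudaMalloc(&devA, sizeof(hostA));  // cudaMalloc alignment satisfies WMMA's 256-bit requirement
    cudaMalloc(&devB, sizeof(hostB));
    cudaMalloc(&devC, sizeof(hostC));
    cudaMemcpy(devA, hostA, sizeof(hostA), cudaMemcpyHostToDevice);
    cudaMemcpy(devB, hostB, sizeof(hostB), cudaMemcpyHostToDevice);
    wmmaSingleTile<<<1, 32>>>(devA, devB, devC);  // exactly one warp
    cudaError_t status = cudaMemcpy(hostC, devC, sizeof(hostC), cudaMemcpyDeviceToHost);
    cudaFree(devA);
    cudaFree(devB);
    cudaFree(devC);
    if (status != cudaSuccess) return false;
    for (int i = 0; i < elems; ++i)
        if (hostC[i] != 16.0f) return false;
    return true;
}
```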
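
As a rough illustration of Cooperative Groups inside a thread block, in the spirit of 0_Simple/simpleCooperativeGroups but not its code, the kernel below takes two steps. First, it partitions each block into 32-thread tiles with `cg::tiled_partition<32>`. Then it reduces within each tile using the tile's `shfl_down` member. `blockTileSum` and `runTileSumDemo` are hypothetical names, and the block size is assumed to be a multiple of 32.

```cuda
#include <cassert>
#include <cooperative_groups.h>
#include <cuda_runtime.h>

namespace cg = cooperative_groups;

// Each 32-thread tile reduces its values with tile.shfl_down; the tile's
// rank-0 thread then adds the partial sum to a global total.
__global__ void blockTileSum(const int* in, int* total) {
    cg::thread_block block = cg::this_thread_block();
    cg::thread_block_tile<32> tile = cg::tiled_partition<32>(block);

    int value = in[blockIdx.x * blockDim.x + block.thread_rank()];
    for (int offset = 16; offset > 0; offset /= 2)
        value += tile.shfl_down(value, offset);  // after the loop, rank 0 holds the tile sum

    if (tile.thread_rank() == 0)
        atomicAdd(total, value);
}

// Hypothetical host helper: 4 blocks of 128 ones should sum to 512.
int runTileSumDemo() {
    const int blocks = 4, threads = 128, n = blocks * threads;
    int hostIn[n];
    for (int i = 0; i < n; ++i) hostIn[i] = 1;
    int *devIn = nullptr, *devTotal = nullptr;
    cudaMalloc(&devIn, sizeof(hostIn));
    cudaMalloc(&devTotal, sizeof(int));
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    cudaMemset(devTotal, 0, sizeof(int));
    blockTileSum<<<blocks, threads>>>(devIn, devTotal);
    int total = -1;  // stays -1 if the copy back fails
    cudaMemcpy(&total, devTotal, sizeof(int), cudaMemcpyDeviceToHost);
    cudaFree(devIn);
    cudaFree(devTotal);
    return total;
}
```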
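
Warp-aggregated atomics, as in the 6_Advanced/warpAggregatedAtomicsCG entry, replace one `atomicAdd` per thread with one per group of active threads. Below is a minimal sketch using `cg::coalesced_threads()` inside a simple filter kernel. The helper names `atomicAggInc`, `filterPositive`, and `runFilterDemo` are hypothetical, and the sample itself may be structured differently.

```cuda
#include <cassert>
#include <cooperative_groups.h>
#include <cuda_runtime.h>

namespace cg = cooperative_groups;

// The currently active threads elect a leader, the leader performs one atomicAdd
// for the whole group, and each thread derives a unique slot from base + rank.
__device__ int atomicAggInc(int* counter) {
    cg::coalesced_group active = cg::coalesced_threads();
    int base = 0;
    if (active.thread_rank() == 0)
        base = atomicAdd(counter, static_cast<int>(active.size()));
    return active.shfl(base, 0) + static_cast<int>(active.thread_rank());
}

// Stream compaction: copy positive inputs to `out` (order across warps is not fixed).
__global__ void filterPositive(const int* in, int n, int* out, int* count) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n && in[i] > 0)
        out[atomicAggInc(count)] = in[i];
}

// Hypothetical host helper: 1000 inputs, every even index holds 1, so 500 survive.
int runFilterDemo() {
    const int n = 1000;
    int hostIn[n], hostOut[n];
    for (int i = 0; i < n; ++i) hostIn[i] = (i % 2 == 0) ? 1 : 0;
    int *devIn = nullptr, *devOut = nullptr, *devCount = nullptr;
    cudaMalloc(&devIn, sizeof(hostIn));
    cudaMalloc(&devOut, sizeof(hostOut));
    cudaMalloc(&devCount, sizeof(int));
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    cudaMemset(devCount, 0, sizeof(int));
    filterPositive<<<(n + 255) / 256, 256>>>(devIn, n, devOut, devCount);
    int count = -1;
    cudaMemcpy(&count, devCount, sizeof(int), cudaMemcpyDeviceToHost);
    bool copied = count >= 0 && count <= n &&
        cudaMemcpy(hostOut, devOut, sizeof(int) * count, cudaMemcpyDeviceToHost) == cudaSuccess;
    cudaFree(devIn);
    cudaFree(devOut);
    cudaFree(devCount);
    if (!copied) return -1;
    for (int i = 0; i < count; ++i)
        if (hostOut[i] != 1) return -1;  // every compacted element must be a kept input
    return count;
}
```

Because the leader reserves `active.size()` consecutive slots at once, each thread's `base + thread_rank()` is unique even when only part of a warp is active.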
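
The 6_Advanced/reduction entry above mentions two reduction styles, and the sketch below computes a warp sum both ways. It always uses `cooperative_groups::reduce` (from `<cooperative_groups/reduce.h>`, CUDA 11.0 or newer). It also uses `__reduce_add_sync`, but only when compiled for compute capability 8.0 or above; on older targets it reuses the CG result. The kernel and helper names are hypothetical, and blocks are assumed to be exactly 32 threads.

```cuda
#include <cassert>
#include <cooperative_groups.h>
#include <cooperative_groups/reduce.h>
#include <cuda_runtime.h>

namespace cg = cooperative_groups;

__global__ void warpReduceKernel(const int* in, int* cgOut, int* intrinsicOut) {
    cg::thread_block_tile<32> tile = cg::tiled_partition<32>(cg::this_thread_block());
    int value = in[blockIdx.x * 32 + threadIdx.x];           // one warp per block
    int viaCg = cg::reduce(tile, value, cg::plus<int>());    // every lane receives the sum
#if defined(__CUDA_ARCH__) && __CUDA_ARCH__ >= 800
    // Compute capability 8.0+: hardware warp-wide add reduction.
    int viaIntrinsic =
        static_cast<int>(__reduce_add_sync(0xffffffffu, static_cast<unsigned>(value)));
#else
    int viaIntrinsic = viaCg;  // __reduce_add_sync is unavailable below compute capability 8.0
#endif
    if (tile.thread_rank() == 0) {
        cgOut[blockIdx.x] = viaCg;
        intrinsicOut[blockIdx.x] = viaIntrinsic;
    }
}

// Hypothetical host helper: block b sums 32*b .. 32*b + 31, i.e. 496 + 1024 * b.
bool runWarpReduceDemo() {
    const int blocks = 2, n = blocks * 32;
    int hostIn[n], hostCg[blocks], hostIntrinsic[blocks];
    for (int i = 0; i < n; ++i) hostIn[i] = i;
    int *devIn = nullptr, *devCg = nullptr, *devIntrinsic = nullptr;
    cudaMalloc(&devIn, sizeof(hostIn));
    cudaMalloc(&devCg, sizeof(hostCg));
    cudaMalloc(&devIntrinsic, sizeof(hostIntrinsic));
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    warpReduceKernel<<<blocks, 32>>>(devIn, devCg, devIntrinsic);
    cudaMemcpy(hostCg, devCg, sizeof(hostCg), cudaMemcpyDeviceToHost);
    cudaError_t status =
        cudaMemcpy(hostIntrinsic, devIntrinsic, sizeof(hostIntrinsic), cudaMemcpyDeviceToHost);
    cudaFree(devIn);
    cudaFree(devCg);
    cudaFree(devIntrinsic);
    if (status != cudaSuccess) return false;
    for (int b = 0; b < blocks; ++b)
        if (hostCg[b] != 496 + 1024 * b || hostIntrinsic[b] != hostCg[b]) return false;
    return true;
}
```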
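
The 0_Simple/globalToShmemAsyncCopy entry and the Tensor Core GEMM entries rely on asynchronous global-to-shared copies. The samples use the CUDA pipeline. As a swapped-in, simpler variant, this sketch uses `cooperative_groups::memcpy_async` and `cooperative_groups::wait` (CUDA 11.0 or newer; hardware-accelerated on compute capability 8.0 and above). `kTile`, `scaleViaShared`, and `runAsyncCopyDemo` are hypothetical names.

```cuda
#include <cassert>
#include <cooperative_groups.h>
#include <cooperative_groups/memcpy_async.h>
#include <cuda_runtime.h>

namespace cg = cooperative_groups;

constexpr int kTile = 256;  // elements staged per block; also the block size used below

__global__ void scaleViaShared(const int* in, int* out, int factor) {
    cg::thread_block block = cg::this_thread_block();
    __shared__ int staged[kTile];
    // The whole block cooperatively issues one async copy; the size argument is in bytes.
    cg::memcpy_async(block, staged, in + blockIdx.x * kTile, sizeof(int) * kTile);
    cg::wait(block);  // wait for the copy to complete; this also synchronizes the block
    out[blockIdx.x * kTile + threadIdx.x] = staged[threadIdx.x] * factor;
}

// Hypothetical host helper: out[i] should equal factor * in[i] with in[i] = i.
bool runAsyncCopyDemo() {
    const int blocks = 4, n = blocks * kTile, factor = 3;
    int hostIn[n], hostOut[n];
    for (int i = 0; i < n; ++i) hostIn[i] = i;
    int *devIn = nullptr, *devOut = nullptr;
    cudaMalloc(&devIn, sizeof(hostIn));
    cudaMalloc(&devOut, sizeof(hostOut));
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    scaleViaShared<<<blocks, kTile>>>(devIn, devOut, factor);
    cudaError_t status = cudaMemcpy(hostOut, devOut, sizeof(hostOut), cudaMemcpyDeviceToHost);
    cudaFree(devIn);
    cudaFree(devOut);
    if (status != cudaSuccess) return false;
    for (int i = 0; i < n; ++i)
        if (hostOut[i] != factor * i) return false;
    return true;
}
```

Each thread reads an element that some other thread may have copied, so the group-wide wait is what makes the shared-memory read safe.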
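
The `binary_partition` entry above describes use in a divergent path, which the following sketch illustrates. After an early return, the surviving threads form a `coalesced_group`. That group is split by a predicate into odd and even groups, and each group's leader issues a single `atomicAdd`. This assumes compute capability 7.0 or newer (compile with, for example, `-arch=sm_70`). That floor is what the changelog gives for `labeled_partition`, and it is assumed here for `binary_partition` as well. The names `countOddEven` and `runParityDemo` are hypothetical.

```cuda
#include <cassert>
#include <cooperative_groups.h>
#include <cuda_runtime.h>

namespace cg = cooperative_groups;

// Count odd and even inputs with one atomicAdd per (warp, parity) group.
__global__ void countOddEven(const int* in, int n, int* oddCount, int* evenCount) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i >= n) return;                                    // divergent early exit
    cg::coalesced_group active = cg::coalesced_threads();  // threads still running here
    bool isOdd = (in[i] & 1) != 0;
    cg::coalesced_group sameParity = cg::binary_partition(active, isOdd);
    if (sameParity.thread_rank() == 0)
        atomicAdd(isOdd ? oddCount : evenCount, static_cast<int>(sameParity.size()));
}

// Hypothetical host helper: inputs 0..99 contain 50 odd and 50 even values.
bool runParityDemo(int* odd, int* even) {
    const int n = 100;
    int hostIn[n];
    for (int i = 0; i < n; ++i) hostIn[i] = i;
    int *devIn = nullptr, *devCounts = nullptr;  // devCounts[0] = odd, devCounts[1] = even
    cudaMalloc(&devIn, sizeof(hostIn));
    cudaMalloc(&devCounts, 2 * sizeof(int));
    cudaMemcpy(devIn, hostIn, sizeof(hostIn), cudaMemcpyHostToDevice);
    cudaMemset(devCounts, 0, 2 * sizeof(int));
    countOddEven<<<1, 128>>>(devIn, n, devCounts, devCounts + 1);  // threads 100..127 exit early
    int hostCounts[2] = {-1, -1};
    cudaError_t status =
        cudaMemcpy(hostCounts, devCounts, sizeof(hostCounts), cudaMemcpyDeviceToHost);
    cudaFree(devIn);
    cudaFree(devCounts);
    *odd = hostCounts[0];
    *even = hostCounts[1];
    return status == cudaSuccess;
}
```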
